Sean and Chanel were panelists at Snake River Fandom Con 2018.

This was one of their panels, along with Ann Knowlton of the USS Rendezvous, a Star Trek charity cosplay group.

What is the difference between consciousness and code? We are going to start from the beginning and work our way up to the complex questions.

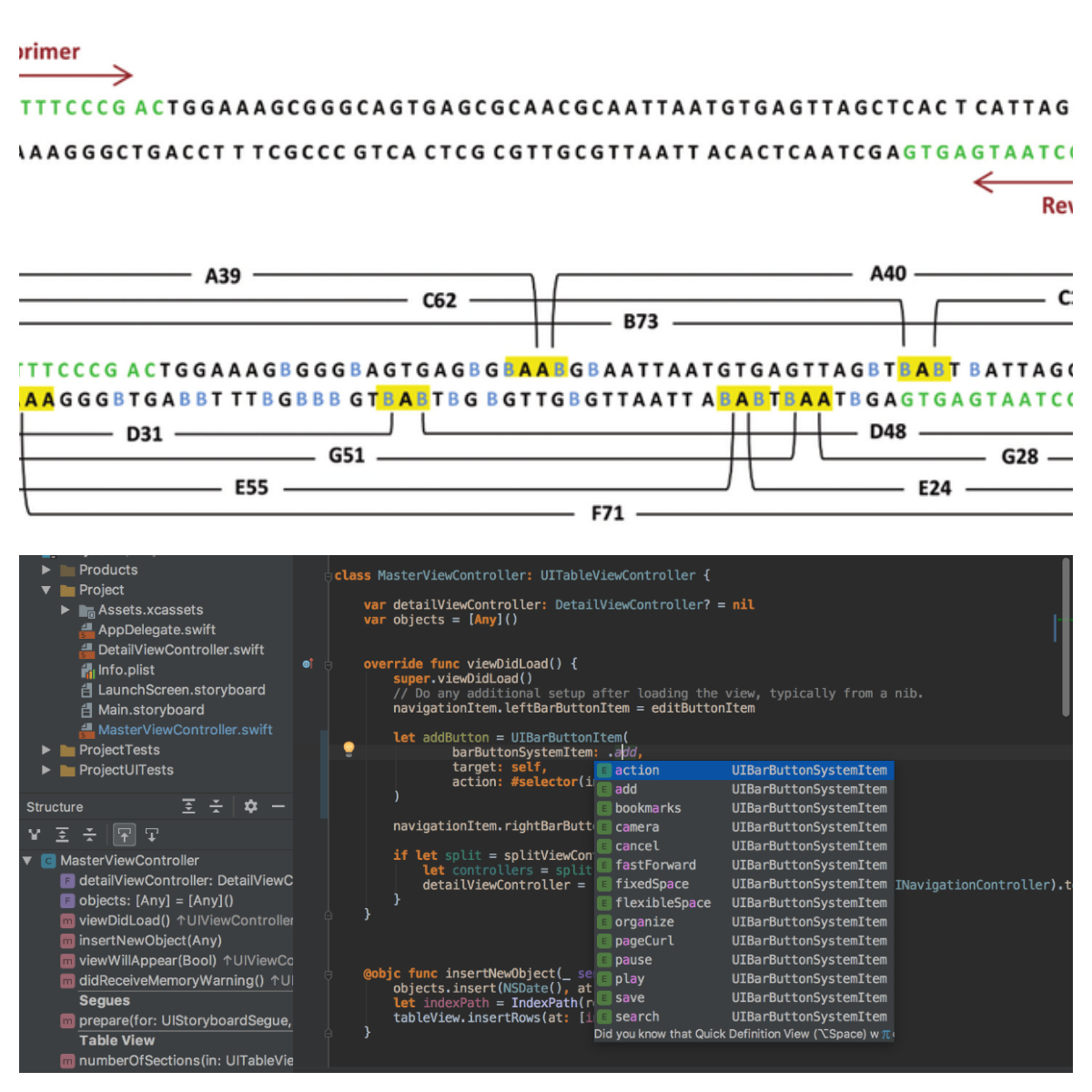

People are created with DNA. DNA is just a list of instructions for your cells to follow, so that they can build and repair the human body. When you look behind a website or app, you see that it is also just a bunch of instructions (HTML and other code) that tell the computer how to create what you want it to.

You may have heard that every cell in the human body gets replaced every seven years. That is an oversimplification (many of the neurons in your brain stay with you for life), but a great deal of your body really is rebuilt over time: skin, blood, and gut-lining cells are replaced constantly, and even your bones are slowly remodeled. This happens because old cells die, and your DNA tells your new cells how to replace the dying ones. But you obviously still identify as the same human being. Just because you aren’t made of exactly the same material you were made of when you were born doesn’t mean that this isn’t still you.

One day, technology might advance to the point where you could take your consciousness out of your dying body and recreate it in a computer, because after all, everything you are is just a set of instructions. If you can translate it from DNA into code, then what’s the difference?

However, that raises a question: do you actually get to live on, or is something entirely new, with your memories, born instead? If we as a society believed that the copy was no longer you because it had been rewritten into a computer, then no one would do it. However, the premise behind many episodes of the show Black Mirror is that we accept that this new thing is still you. Even if that’s not the case, how would NewYou know? They have all your memories and your mind. Therefore, NewYou would identify as human, even though they were recreated in code. Does a bunch of code that self-identifies as human have rights? It would, because the loved ones of NewYou would defend their rights: they still love you, and NewYou is indistinguishable from TrueYou.

Jumping to the show Westworld: there, technology has advanced not to the point where we recreate ourselves as robots, but to the point where we have made robots that are indistinguishable from regular humans. They are so convincing that the robots themselves self-identify as human. At that point, what’s the difference between someone who is recreated in code and someone who is born in code, especially if you can’t tell them apart? Do they get the same rights as humans, because they are indistinguishable from humans?

Who Fights for Robo-Rights?

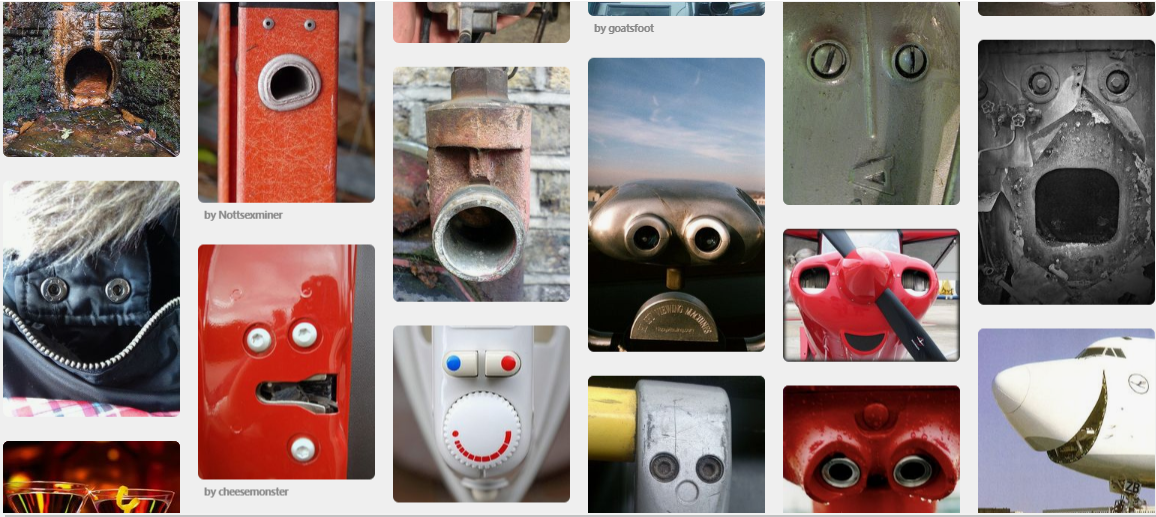

Humans have a tendency to humanize non-human things. At the most basic level, look at these pictures. What do you see?

If you are like most people, you see a bunch of faces in these cars, pipes, and jacket zippers. This is called pareidolia. And the tendency extends beyond faces.

Georgia Tech even did a study about people and their Roombas. That’s right, the small robot that vacuums your house so you don’t have to. They found that people name and gender their Roombas, dress them up, and even rearrange the furniture in their houses to better accommodate their Roombas.

Not only that, but iRobot, the company that makes the Roomba, had a policy where if your Roomba got damaged, you could send it in and get a new one. But something strange kept happening. People asked for their same Roomba to be fixed and sent back, as opposed to just getting a new one. They had grown such an emotional attachment to their Roomba that they felt it had to be the same one, in the same way that if your dog gets hurt, you don’t want a different dog that isn’t hurt; you want to help heal your dog.

Speaking of dogs and robots, take a look at this awesome robot that looks like a dog!

However, imagine that robot lived in your house. Based on the Roomba study, we can guess that you would start to form an emotional attachment to that RoboDog. Now look at that .gif again, but imagine that you cared for it just like you do a regular pet, just like the Roomba owners do.

You are going to get upset! You can’t kick my RoboDog! It might be for the same reason you would get upset at someone kicking your car, but chances are it’s going to be closer to someone kicking your actual pet.

Going back to the difference between robots that are programmed to think like humans and regular humans, RoboDog might be programmed to think like a real dog. Then, when it gets kicked, it is going to get upset. Our “Roomba Instincts” are going to kick in, and we will feel pity for our RoboDog because it is sad about being kicked.

So now RoboDog believes itself to be a real dog, and someone kicks it. At that point, people are going to start arguing for Robo-Rights in the same way they fight for animal rights!

I can hear the arguments already:

“Robots Deserve Rights!”

“Robots are things! Things we created, why do they deserve rights?”

“Because they have feelings!”

“Feelings we gave them! They aren’t real!”

“They don’t know that!”

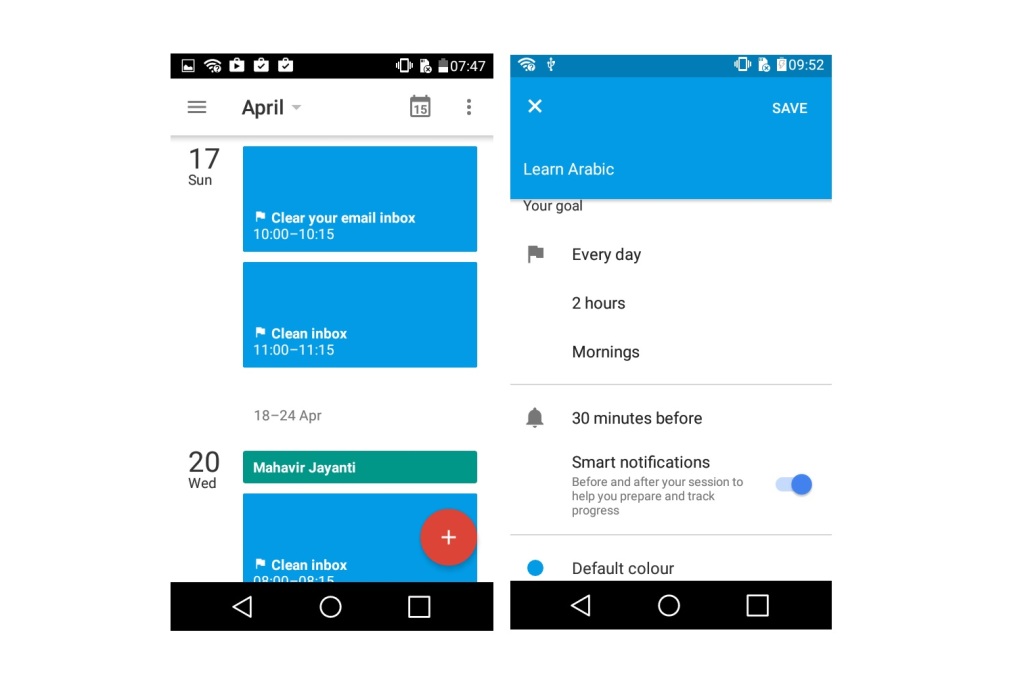

Not only that, but we are already teaching machines to learn. Take a look at something like Google Calendar. As you keep adding appointments, goals, and reminders, the app starts to learn where in the month, week, and even day you like to do certain things, and it starts to guess when you want to schedule your next event. This is learning: when Situation A happens, Situation B usually follows.
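To make “when Situation A happens, Situation B usually follows” concrete, here is a toy Python sketch that looks at when you have scheduled a kind of event in the past and suggests the slot you used most often. The event history and the `suggest_slot` function are made up for illustration; this is not how Google Calendar actually works, and real apps use their own, more sophisticated methods.

```python
from collections import Counter

# Hypothetical event history: (event type, weekday, hour) tuples.
# Toy illustration only, not Google Calendar's real method.
history = [
    ("gym", "Mon", 18),
    ("gym", "Wed", 18),
    ("gym", "Mon", 18),
    ("gym", "Sat", 9),
    ("dentist", "Tue", 10),
]

def suggest_slot(history, event_type):
    """Return the (weekday, hour) most often used for event_type, or None."""
    # Count how often each slot was used for this kind of event.
    slots = Counter((day, hour) for kind, day, hour in history if kind == event_type)
    if not slots:
        return None  # nothing learned yet for this event type
    # most_common(1) returns a list like [((day, hour), count)]
    return slots.most_common(1)[0][0]

print(suggest_slot(history, "gym"))  # ('Mon', 18)
```

The “learning” here is nothing more than counting: the more often a pattern shows up in your history, the more confident the guess becomes.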

So now back to RoboDog: someone kicks it, and it feels upset about it. It has learned that Situation A (being kicked) leads to Situation B (being sad). If it gets kicked again, it will try to find the pattern: what happened before it got kicked? That’s the new Situation A. Let’s say both kicks came from a man wearing jeans. Now RoboDog knows that Situation A (being near a man in jeans) leads to Situation B (getting kicked), which leads to Situation C (being sad).

This is where Pavlov comes in: like Pavlov’s dogs, RoboDog will immediately get sad when it is near a man in jeans. If it is programmed to avoid situations that make it sad (which is essentially fear, something necessary for survival), then it might try to avoid being near any man in jeans. Now we have a preconceived notion. When a preconceived notion comes from a negative experience, we usually call that “trauma.”
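RoboDog’s reasoning can be sketched as a tiny pattern-finder: keep only the features that were present before every kick, then steer clear of any situation that contains all of them. This is a deliberately simplistic, hypothetical model; the function names `learn_triggers` and `should_avoid` and the feature sets are invented for illustration, and real robot learning systems are statistical and far messier.

```python
# Toy model of RoboDog's pattern-finding. All names here are hypothetical.

def learn_triggers(kick_contexts):
    """Return the features present before every kick (the learned Situation A)."""
    if not kick_contexts:
        return set()  # no kicks yet, so nothing to fear
    triggers = set(kick_contexts[0])
    for context in kick_contexts[1:]:
        triggers &= set(context)  # keep only features seen before each kick
    return triggers

def should_avoid(context, triggers):
    """Avoid a situation if it contains every learned trigger feature."""
    return bool(triggers) and triggers <= set(context)

# Each kick is recorded with what RoboDog noticed just beforehand.
kicks = [
    {"man", "jeans", "kitchen"},
    {"man", "jeans", "backyard"},
]
triggers = learn_triggers(kicks)
print(sorted(triggers))                                    # ['jeans', 'man']
print(should_avoid({"man", "jeans", "park"}, triggers))    # True
print(should_avoid({"woman", "jeans", "park"}, triggers))  # False
```

Notice that the location drops out (the kicks happened in different places), but “man” and “jeans” survive, so RoboDog now avoids every man in jeans, including ones who have never kicked anything. That overgeneralization is exactly the preconceived notion described above.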

So now we have RoboDog with trauma. If people weren’t fighting for Robo-Rights before, they sure are now, because we are traumatizing something we have an emotional connection to. In fact, back to Westworld: a common theme is that when the robots are suffering (in trauma), that is when they are closest to being human.

So when, at some point in the future, you hear the phrase “Robot Rights,” remember that it really isn’t all that crazy.

All of this basically comes down to two questions.

- What is the difference between consciousness and code?

- Do robots deserve rights?
